About Me

I am working towards AI that unifies all physical sensors into one body of perception — pushing machine understanding beyond the boundaries of human perception.

I am currently a postdoctoral researcher in the Cyber Physical Systems (CPS) group at the University of Oxford, under the supervision of Profs. Niki Trigoni and Andrew Markham. My research builds AI systems that unify diverse physical sensors (RGB, LiDAR, radar, thermal, and event cameras) into robust perception systems that extend how we understand the physical world.

I obtained my D.Phil. (PhD) in Computer Science at the University of Oxford, co-supervised by Profs. Niki Trigoni and Andrew Markham in the CPS group. My studies were generously supported by the Global Korea Scholarship (GKS) Program and the ACE-OPS grant. Prior to my D.Phil., I worked as a research associate supervised by Prof. Yong-Guk Kim at Sejong University, South Korea, where I also completed my undergraduate and master's studies in Computer Science.

Research Interests

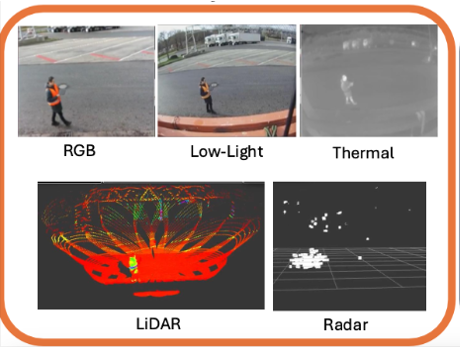

The physical world is rich with information invisible to human senses — heat, radar reflections, microsecond dynamics. My research advances multi-modal and multi-view sensing with diverse physical sensors, including RGB-D cameras, LiDAR, mmWave radar, thermal cameras, and event cameras. A key challenge in real-world settings is achieving reliable long-term localization of these sensors in a shared reference space, enabling accurate multi-view fusion while leveraging the unique capabilities of each modality.

Beyond localization, I am interested in how foundation models can develop reasoning abilities across modalities—understanding the complementary strengths of each sensor type and dynamically selecting and combining the most informative modalities for a given task. This supports robust, context-aware performance on downstream applications, including detection, tracking, re-identification, anomaly detection, and more.

Ultimately, my work seeks to bridge physical sensing with adaptive multi-modal learning, building perception systems that are accurate, resilient, and capable of operating reliably in complex real-world environments.

Research Platform

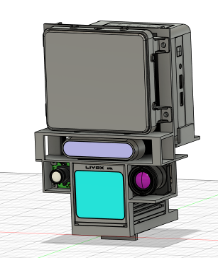

We have designed and built Frankenstein, a multi-modal sensing platform that integrates diverse physical sensors—including RGB-D cameras, LiDAR, mmWave radar, and event cameras—into a unified system. The goal is to enable robust, long-term perception in complex real-world environments by leveraging the complementary strengths of each modality.

By co-registering and fusing data from these heterogeneous sensors, the platform supports research in cross-domain generalization, multi-view localization, and adaptive modality fusion for downstream machine learning tasks.

Using four synchronised Frankenstein units, we have recorded long-term, large-scale multi-view, multi-modal datasets from a wide range of domains such as campus, industrial, and wildlife environments, capturing diverse real-world conditions that unimodal perception cannot address.

Ongoing Research

Multi-Modal Localization with Thermal, RGB, and mmWave Radar

Leveraging our multi-modal sensing platform (Frankenstein), this project explores robust localization by fusing thermal imaging, RGB cameras, and mmWave radar. By combining these complementary modalities, the system achieves reliable pose estimation across challenging conditions such as poor lighting, adverse weather, and visually degraded environments.

This work demonstrates that multi-modal fusion is essential for reliable localization — a core building block toward a foundation model that reasons across all sensor modalities.

Extended Perception via Multi-Modal Fusion

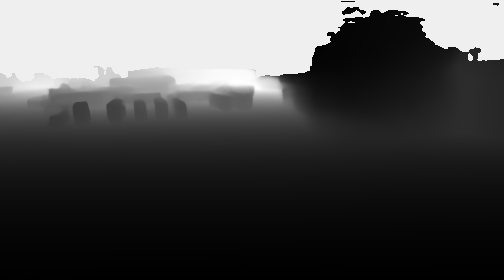

In the real world, there are many circumstances in which unimodal models fail badly. For example, rain and water droplets on camera lenses severely degrade RGB-based perception, causing even state-of-the-art monocular depth estimation algorithms to fail. In safety-critical applications, this creates dangerous blind spots. This project investigates how complementary modalities can compensate for vision degradation and overcome unimodal limitations, enabling reliable perception under all weather conditions.

This work shows why multi-modal perception is not optional but essential — a core motivation for building an engine where AI reasons about which sensors to trust and how to fuse them.

Unimodal Enhancement

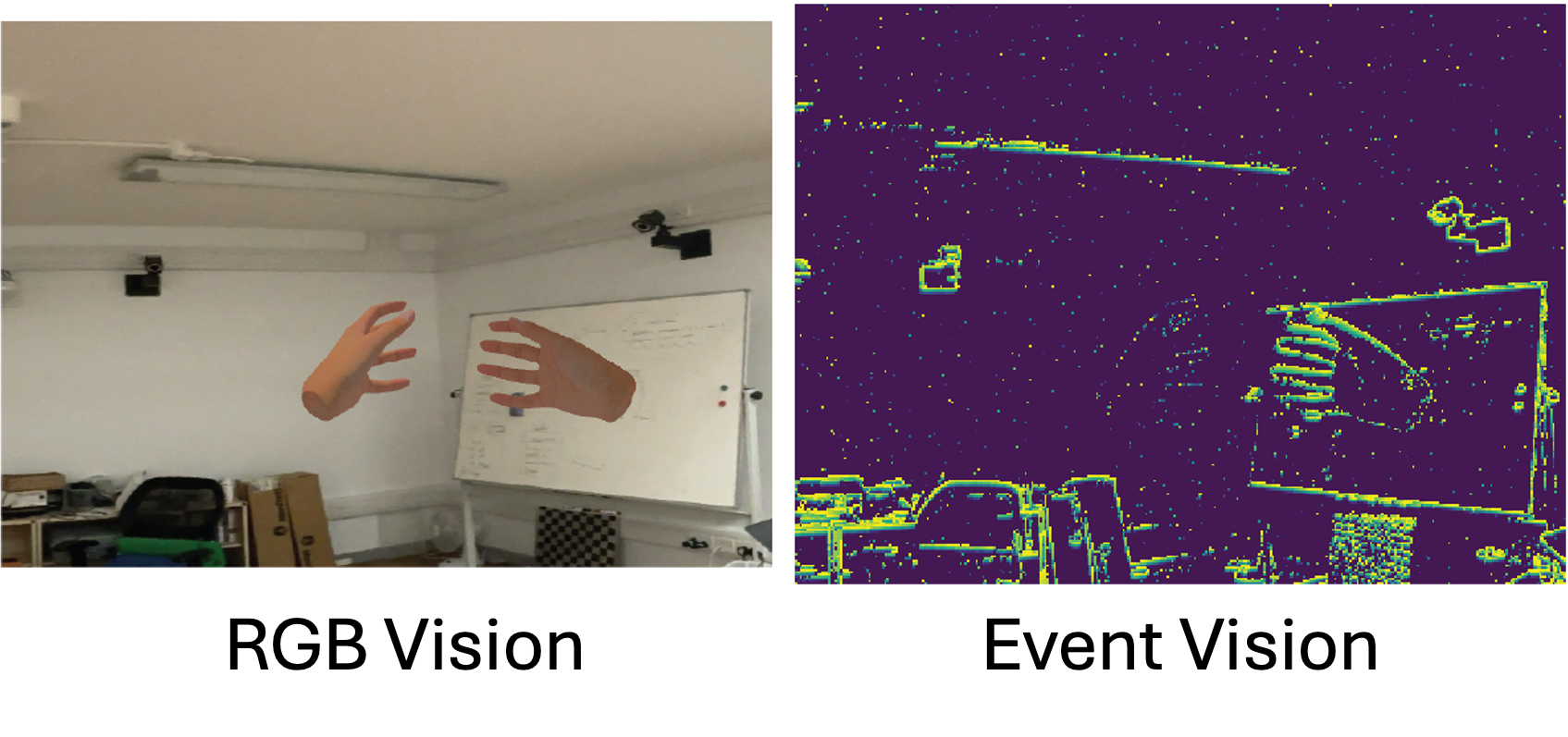

While RGB-based perception has been extensively studied, many other modalities that can address the limitations of RGB remain under-explored. For example, mmWave radar and event cameras offer essential complementary information. Radar provides robust sensing in adverse weather and lighting conditions, while event cameras capture high-temporal-resolution motion with minimal latency. Enhancing the individual capabilities of these under-explored modalities extends the perception capabilities of the downstream intelligent systems that rely on them.

Advancing each modality individually is a prerequisite for effective fusion — the engine must understand what each sensor can uniquely contribute before it can reason about how to combine them.

News

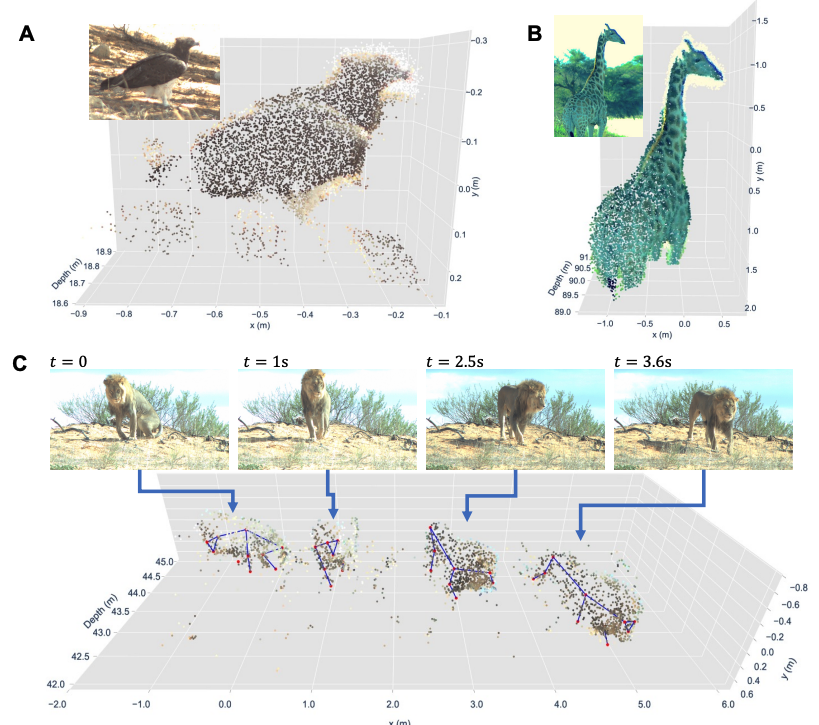

- [Mar. 2026] Our paper WildDepth on a large-scale dataset with calibrated multi-modal sensors for 3D wildlife perception and depth estimation is now available on arXiv!

- [Nov. 2025] Our survey paper Revisiting U-Net, which examines U-Net as a foundational backbone for modern generative AI, got published in Artificial Intelligence Review (Springer)!

- [Nov. 2025] Our paper Thermal-to-RGB on enhancing low-resolution thermal imagery with diffusion models for wildlife monitoring got published at the ACM International Workshop on Thermal Sensing and Computing 2025!

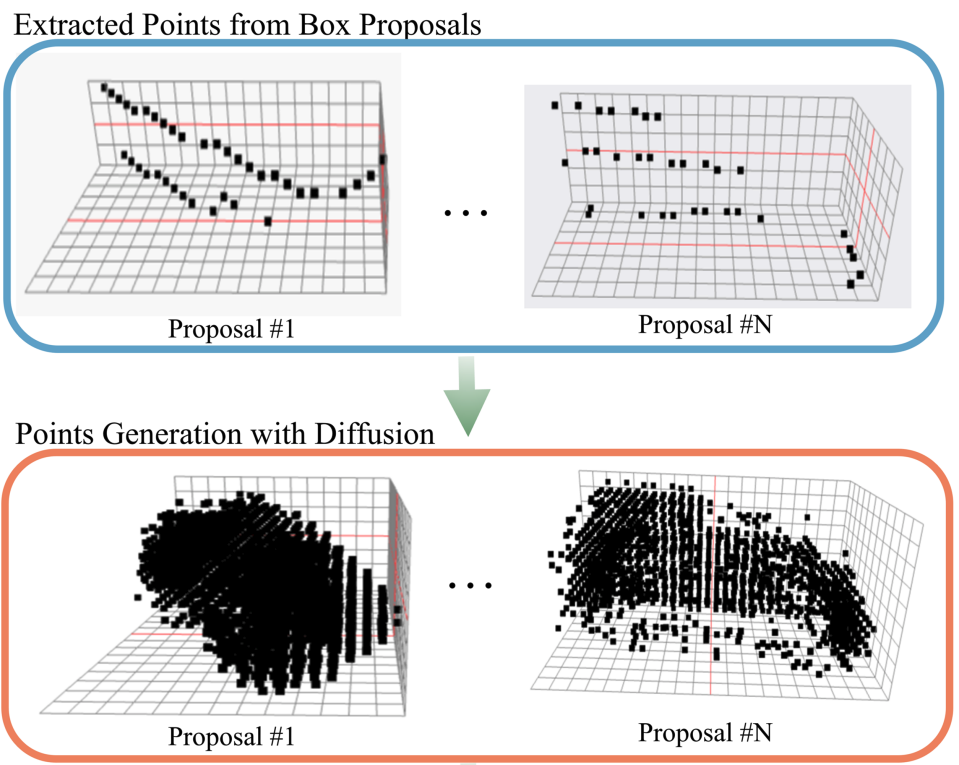

- [Jun. 2025] Our paper DiffRefine on generative cross-domain detection in 3D got accepted into ICCV 2025 for spotlight presentation!

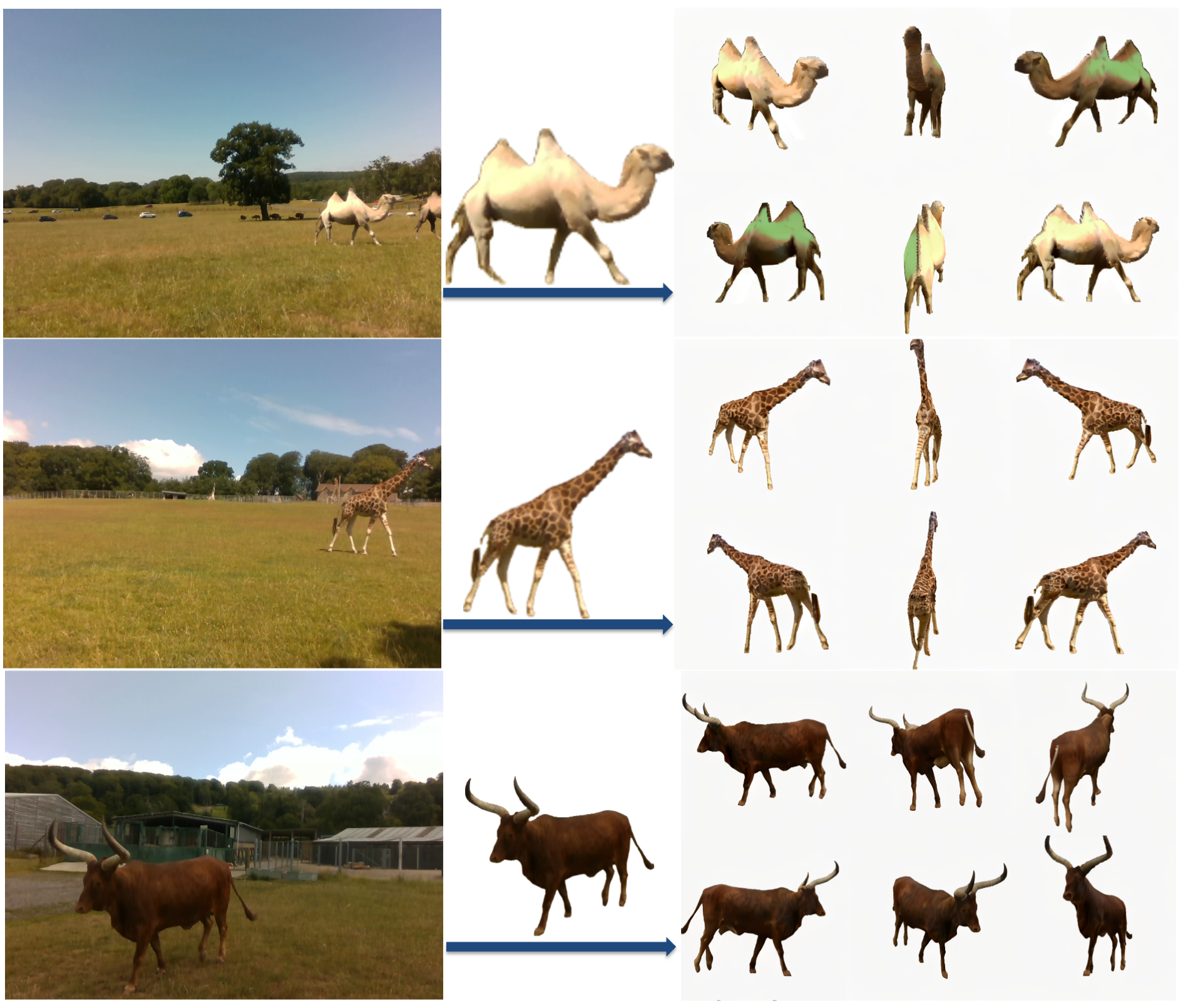

- [Mar. 2025] Our paper WildPose on a multi-modal sensing dataset for deformable animals got accepted into the Journal of Experimental Biology and was selected as the cover of the issue!

- [Jan. 2025] Completed my thesis corrections and obtained D.Phil. (PhD) status!

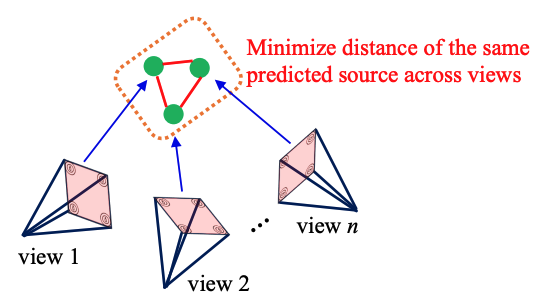

- [Jan. 2025] Our paper SoundLoc3D on sound source localization got accepted into WACV 2025 for oral presentation!

- [Oct. 2024] Started my role as a postdoctoral researcher in the CPS group.

- [Oct. 2024] Successfully defended my D.Phil. viva! (Internal Examiner: Prof. Christian Rupprecht at VGG; External Examiner: Prof. Dimitrios Kanoulas at UCL.)

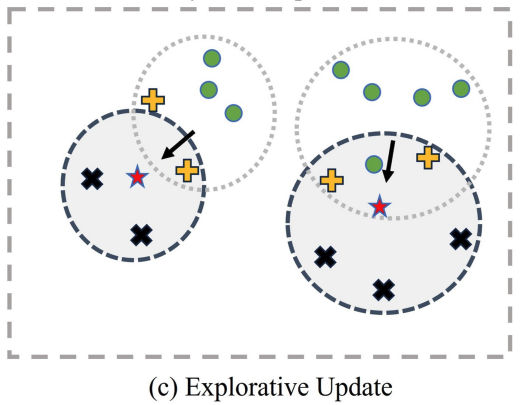

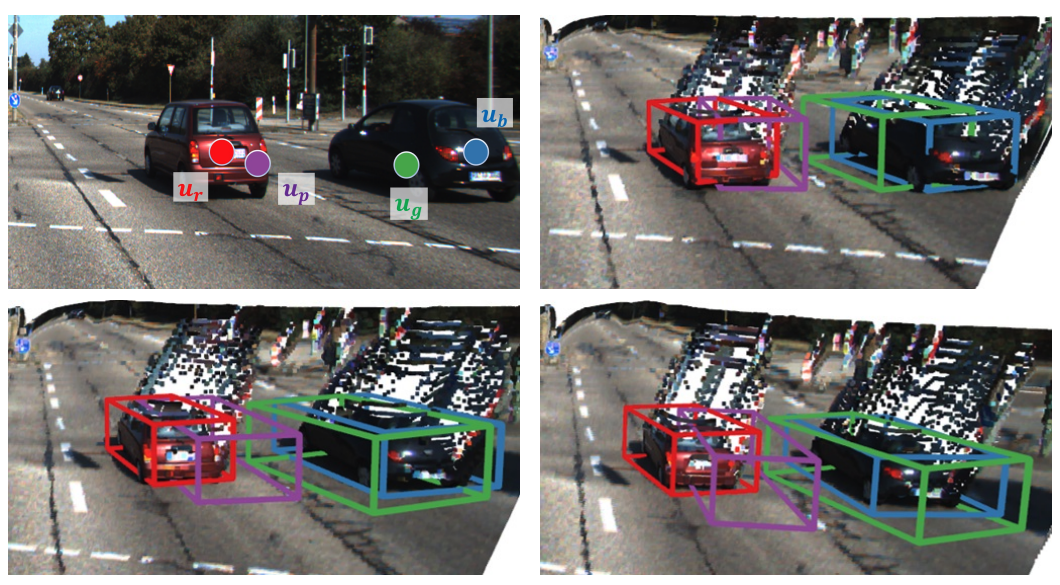

- [Sep. 2024] Our paper GroupExp-DA on domain-adaptive 3D detection got accepted into NeurIPS 2024!

- [May 2024] Our paper on stereo depth estimation with visual foundation models got accepted into ICRA 2024!

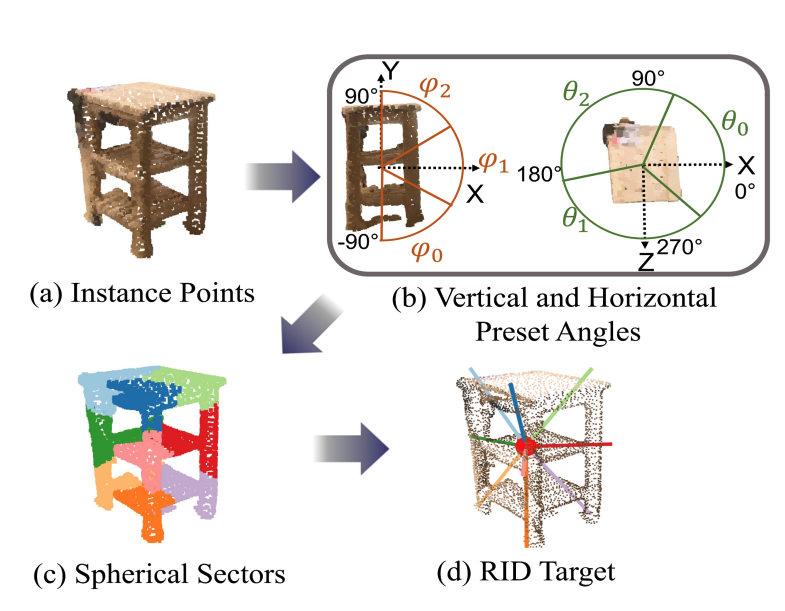

- [Mar. 2024] Our paper Spherical Mask on 3D instance segmentation got accepted into CVPR 2024!

- [Feb. 2024] Defended my Confirmation viva (Examiners: Profs. Ronald Clark and Alessandro Abate).

- [Jan. 2024] Our paper Sound3DVDet on sound source localization got accepted into WACV 2024!

- [Jul. 2023] Our paper on view synthesis with NeRF got accepted into NeurIPS 2023!

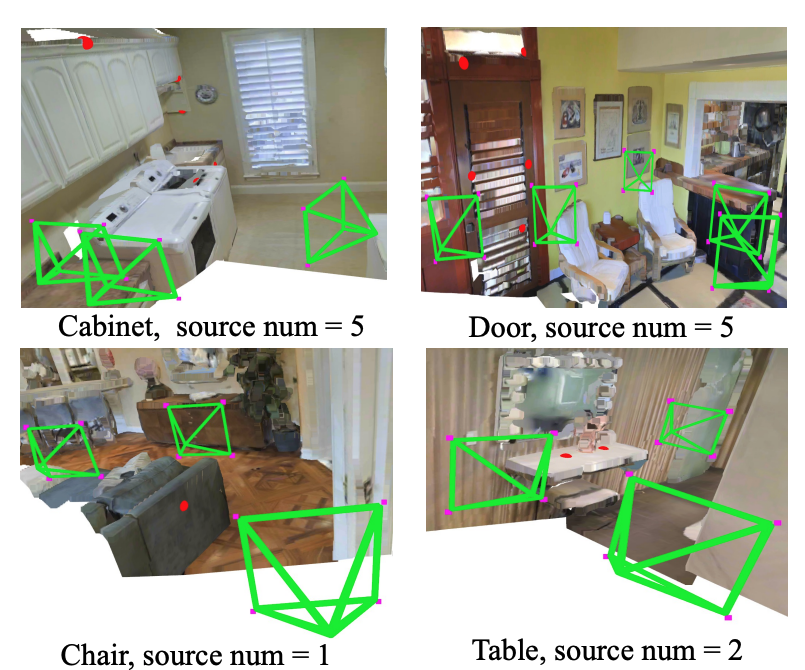

- [Jan. 2023] Our paper Sample, Crop, Track on self-supervised 3D object detection got accepted into ICRA 2023!

- [Aug. 2022] Our paper on monocular night-time depth estimation got accepted into CoRL 2023!

- [Mar. 2022] Our paper on monocular SLAM for UAVs got accepted into IROS 2022!

- [Jan. 2022] Defended my Transfer of Status viva (Examiners: Profs. Alex Rogers and Alessandro Abate).

- [Oct. 2020] Started my D.Phil. (PhD) at the University of Oxford.

- [Aug. 2020] Completed my master's research at Sejong University, South Korea, on vision-based autonomous drone navigation — our team won 1st place at the NeurIPS 2019 Autonomous Drone Racing Competition! See our paper and demo video.

Selected Publications

Contact

Email: sangyun.shin@cs.ox.ac.uk

I'm open to opportunities, collaborations and research discussions. Feel free to reach out!